Get Metadata activity - used to get files to process

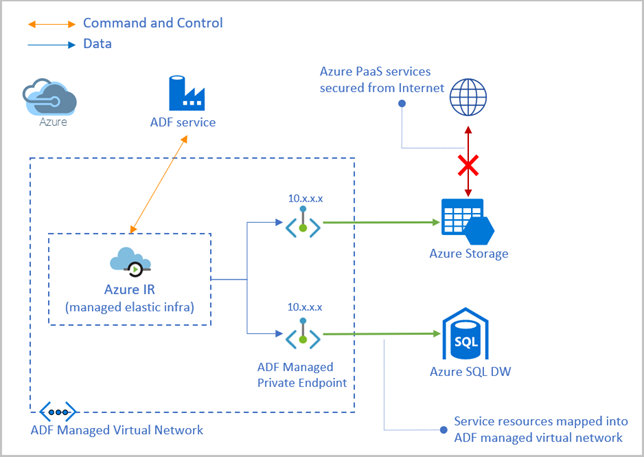

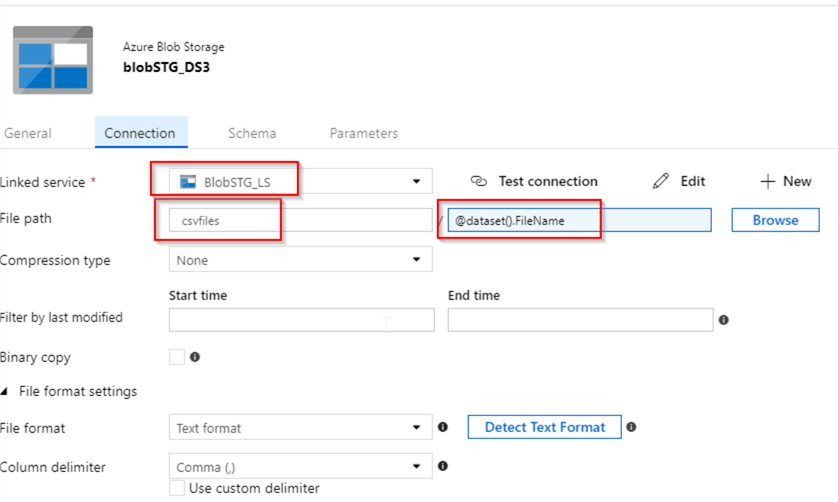

The first activity, Get Metadata is used to get a list of all the files using the file prefix. Next, I added two activities to the pipeline. One was for the name of the webhook source ( WebhookSource) and one for the FilePrefix to use. Once that’s created, I created the pipeline copyWebhookSource. This is called a linked service: Linked connection to Azure Blob Storage account The first step was to create a connection to the Azure Blob Storage resource where everything resided. execute pipeline runAllFilePrefixes & pass in the prefix to useĬreating the inner loop “copyWebhookSource” pipeline.for each item in an array ( the array contained file prefixes).I had to go even further on one container and two two characters in the filename prefix. So, I created my own partition of 36 groupings for each container: instead of “for each file”, I had to get a subset of files who’s names started with 0-9,a-z. Apparently ADF doesn’t like working with more than 1M in a collection. The first time I ran the above, I keep running into timeouts and limit errors. copy the file to the new location, using the specified timestamp to generate the nested structure.open the file and get the timestamp property.I wanted to move all contents into new containers but use the event date, indicated with a timestamp property within each JSON file, into a folder partition using the scheme /YYYY-MM-DD/HH/.json. Recall I have multiple containers, each with millions of JSON files in them. Overview of the solution & processīefore diving in, let’s take a look at an overview of the solution and process. This blog post will explain how I figured this out. Unfortunately, I found it much harder to figure out as the docs tutorials and docs for ADF had some significant gaps, and ot make matters worse there weren’t too many people who had the same questions I had in various communities. 
I realized moving the data to this new partitioned setup would take time, so I did what so many others do: I decided it’s not critical and put it off.Īlways wanting to learn something new, I decided to use Azure Data Factory (ADF) pipelines to move this around. The webhook post files would go into each of these buckets depending when they were submitted. A new folder would be created for each day and within that, there would be 24 folders, one for each hour of the day. This is a common pattern to follow that many tools and systems understand. A few months later I tried to work with this data but quickly had the realization: “I can’t do anything with this as the datasets are too big… I should have partitioned the data somehow.” What I should have done was setup a folder structure where I used the timestamp of the webhook submission to create the following partition: /YYYY-MM-DD/HH/.json Later, I took a few days to update the Azure Function to process the data and send it to it’s final location.īut I was left with a problem: multiple containers with millions of JSON files in the root of each container. So, during this time, I took the easy way out: the Azure Functions just saved the raw webhook payload to one of a few Azure Storage Blob containers (one for each SaaS source). I didn’t have time to build the process that would actually use and store the data in the way I needed it, but I knew I didn’t want to ignore this time.

A few years ago, over the span of 10 months, I had webhooks from various SaaS products submitting HTTP POSTS to an Let me first take a minute and explain my scenario. To move millions of files between to file-based stores (Ĭontainers) but using a value within the contents of each file as a criteria where the file would go be saved to. It’s situations like these that interest me because not only do i want to figure it out, but I want to write about it as well to help others who may run into this. I didn’t think the scenario was all that unique, but I could find not a single article or post on how to solve this problem. Recently I ran across a scenario and found myself coming up empty in looking for resources on how to solve it.
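To make the partitioning logic in this post a bit more concrete, here is a minimal Python sketch. It is not the ADF pipeline itself, just an illustration of the two ideas: splitting a container's files into groupings by file name prefix, and deriving the /YYYY-MM-DD/HH/ folder from a timestamp inside each JSON file. The function names, the `timestamp` property name, the ISO 8601 timestamp format, and the `file_name` argument are all assumptions made for the example.

```python
import json
import string
from datetime import datetime

# Characters assumed to start a file name: 0-9 and a-z.
PREFIX_CHARS = string.digits + string.ascii_lowercase


def single_char_prefixes() -> list[str]:
    """Return the 36 one-character groupings (0-9, a-z)."""
    return list(PREFIX_CHARS)


def two_char_prefixes() -> list[str]:
    """Return every two-character prefix, for a container too large for 36 groupings."""
    return [first + second for first in PREFIX_CHARS for second in PREFIX_CHARS]


def partitioned_path(file_name: str, payload: str, timestamp_key: str = "timestamp") -> str:
    """Build a /YYYY-MM-DD/HH/<file_name> path from the timestamp in a JSON payload.

    Assumes the payload is a JSON object whose timestamp_key holds an
    ISO 8601 string; the property name and format are hypothetical.
    """
    event = json.loads(payload)
    # Normalize a trailing "Z" so datetime.fromisoformat accepts it on older Python versions.
    moment = datetime.fromisoformat(event[timestamp_key].replace("Z", "+00:00"))
    return f"/{moment:%Y-%m-%d}/{moment:%H}/{file_name}"
```

With one-character prefixes each container is split into 36 groupings; moving to two characters gives 36 x 36 = 1,296 much smaller groupings, which is the kind of finer split needed for the largest container.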
